Overview

Split testing (commonly referred to as "A/B Testing") is a method of testing which of two or more variants of a message produces better engagement with your recipients.

The variants can be different in subject line, content and/or timing. You can test simple things like whether one subject line is better than another in terms of open rates, and more complex questions such as whether (and by how much) a 10% discount outperforms a 5% discount.

With Remarkety, you can test both newsletters and automations. However, the process differs slightly between the two because of how these campaign types behave, as explained below.

Testing Newsletters

The main concept of split-testing newsletters is that you test your variations on a small "sample group" to see which variation performs best, and then send that specific variation to the rest of the recipients. The test is concluded once the winning variation has been sent to everyone else.

Set Up Variations

You can test up to 3 variations per newsletter. For the test to be effective, we recommend first building a good baseline and only then adding a variation, since the new variation is initially copied as-is from the baseline.

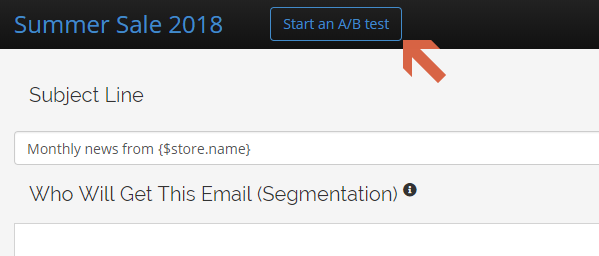

Once you've edited and designed your baseline variation, click on the "Start ad A/B test" button in the campaign editor:

...and you'll see the variation tabs. You can jump back and forth between the variations at any time when editing the campaign. The "Variation B" is initially created as a copy of Variation A, but later changes to A do not get automatically copied to B.

Go ahead and change anything you like: subject, "from" settings, content, and so on. Note, however, that we recommend making only one significant change per test; otherwise it will be difficult to tell why one variation performed better than another.

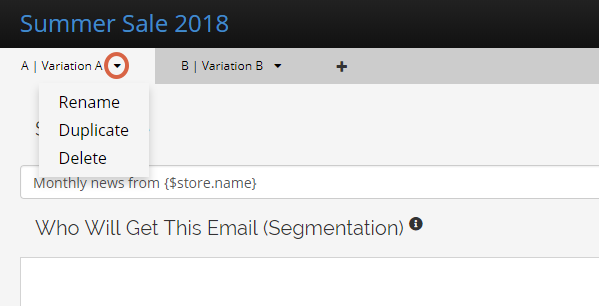

You can rename the variations so that you can easily recall what you were testing. Click on the variation's context menu to rename, duplicate or delete a variation.

Sending a Split Test

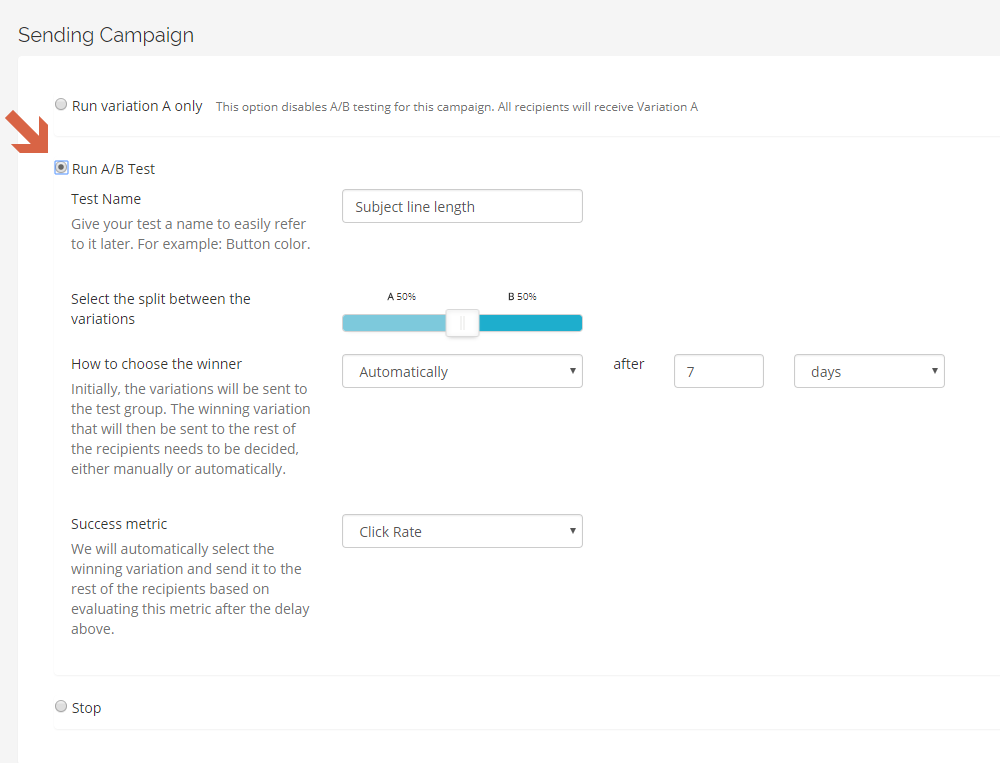

When you're ready to send your newsletter, go to the "Send Campaign" tab, where you'll see the Split Test settings.

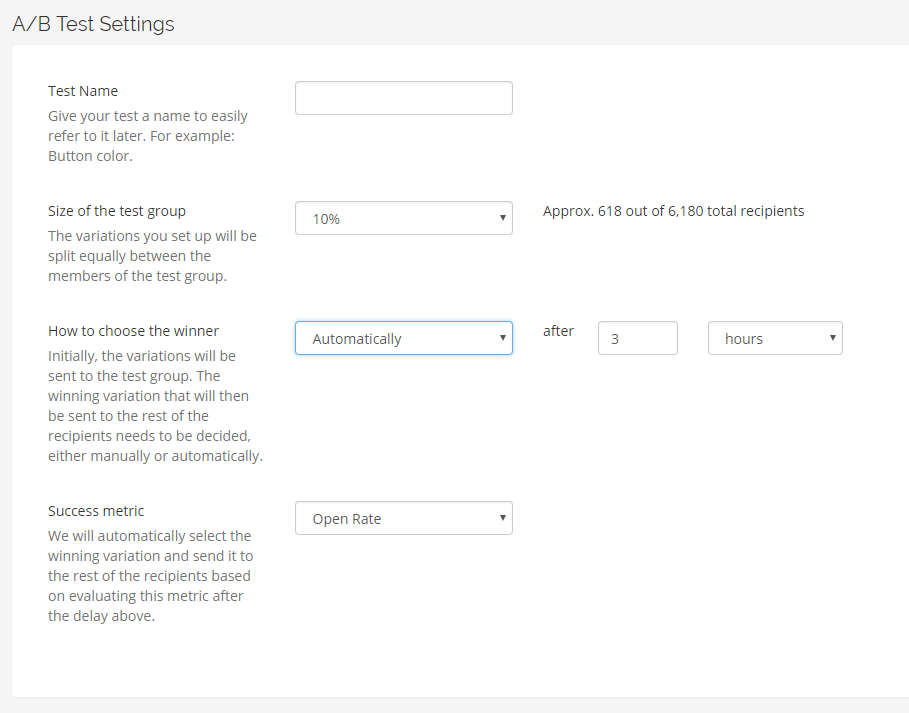

Test name: You can give your test an optional name (such as "Button color" or "Serious vs. funny subject") so that you can easily reference what you were testing later on.

Size of the test group: This is the percentage of this newsletter's total potential recipients that will receive the variations. For example, if your total list size for this campaign is 100,000 recipients and you choose a group size of 10%, the test group is 10,000 recipients, split evenly between the variations (5,000 each for 2 variations, about 3,333 each for 3). Note that the actual split will not be exact, because we use random hashing to make sure the groups are properly shuffled.
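To make the group-size arithmetic and the idea of hash-based assignment concrete, here is a minimal Python sketch. Remarkety does not document its hashing scheme, so the SHA-256 bucketing, the `salt` value, and the function names `planned_sizes` and `assign_recipient` are hypothetical; the sketch only illustrates why a hash-based split is repeatable but not exactly even.

```python
import hashlib
from typing import Optional, Tuple

VARIATION_NAMES = "ABC"  # newsletters support up to 3 variations


def planned_sizes(total_recipients: int, test_pct: float, n_variations: int) -> Tuple[int, int]:
    """Return (test group size, nominal size of each variation's share)."""
    test_group = int(total_recipients * test_pct / 100)
    return test_group, test_group // n_variations


def assign_recipient(email: str, test_pct: float, n_variations: int,
                     salt: str = "newsletter-123") -> Optional[str]:
    """Hypothetical hash-based assignment (not Remarkety's actual scheme).

    Returns the variation name ("A", "B", ...) for recipients in the test group,
    or None for recipients held back to receive the winning variation later.
    """
    digest = hashlib.sha256(f"{salt}:{email.lower()}".encode("utf-8")).digest()
    value = int.from_bytes(digest[:8], "big")
    bucket = value % 10_000  # 10,000 buckets give 0.01% resolution
    if bucket >= test_pct * 100:
        return None
    # Use a different part of the hash to pick the variation within the test group
    return VARIATION_NAMES[(value // 10_000) % n_variations]


print(planned_sizes(100_000, 10, 2))  # (10000, 5000)
print(planned_sizes(100_000, 10, 3))  # (10000, 3333)
```

Because each recipient's group comes from a hash rather than a counter, the same address always lands in the same group for a given salt, while the actual group sizes only approximate 10% and an even split.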

How to choose a winner: If you choose "Automatically", the winning variation will be sent out automatically (see below). If you choose "Manually", it is up to you to check back some time after the variations have been sent to the test group and decide which variation to send to the rest. The winning variation will be sent out only when you manually select the winner. See "Reporting" below.

Success metric: If you let Remarkety choose the winner automatically, define the success metric that determines the winner and how much time should pass before the decision is made. We will then send out the winning variation automatically.
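As a rough illustration of automatic selection, the sketch below waits until the configured time has passed and then picks the variation with the highest rate for the chosen metric. The `VariationStats` fields, the metric names, and the rate definition (events divided by delivered emails) are assumptions for illustration, not Remarkety's documented calculation.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class VariationStats:
    # Hypothetical per-variation counters
    name: str
    delivered: int
    opens: int
    clicks: int


def rate(stats: VariationStats, metric: str) -> float:
    """Events per delivered email for the chosen metric ("opens" or "clicks")."""
    if stats.delivered == 0:
        return 0.0
    return getattr(stats, metric) / stats.delivered


def auto_winner(variations: List[VariationStats], metric: str,
                sent_at: datetime, wait: timedelta, now: datetime) -> Optional[str]:
    """Return the winning variation's name, or None if the waiting time hasn't elapsed."""
    if now < sent_at + wait:
        return None
    return max(variations, key=lambda v: rate(v, metric)).name


stats = [VariationStats("A", 5_000, 1_100, 90), VariationStats("B", 5_000, 1_250, 70)]
sent = datetime(2024, 1, 1, 9, 0)
print(auto_winner(stats, "opens", sent, timedelta(hours=4), sent + timedelta(hours=5)))   # B
print(auto_winner(stats, "clicks", sent, timedelta(hours=4), sent + timedelta(hours=5)))  # A
```

The two calls show why the choice of success metric matters: the same results produce a different winner depending on whether you optimize for opens (22% vs. 25%) or clicks (1.8% vs. 1.4%).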

Reporting

Once a newsletter split test has started running, you can go to its report page to see the test's current stats. Until a winner has been selected (either manually or automatically), you will see "Select a Winner" buttons under each variation. You can select a winner yourself at any time, even when the campaign is configured to pick one automatically. The winning variation is sent to the rest of the recipients as soon as you pick it.

You can always go back and review the stats for split tests, even after the winner has been selected.

Testing Automations

Testing automations is similar to testing newsletters, but not identical. Whereas newsletters are a "one-shot" affair, automations can be continually tweaked and improved. Think of your existing automation as a baseline: you keep testing changes to see whether you can improve on it. If a change wins, it becomes your new baseline; if not, just scrap the variation and return to the baseline. You can then start a new experiment that tests another factor, and thus travel the road of continuous improvement with confidence.

Setup

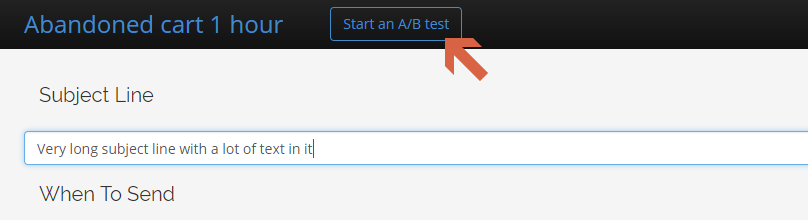

In any automation, click on the "Start An A/B Test" button to create a new variation:

The first variation is automatically copied to the new one. Use the tabs to switch between variations, and modify anything (subject, content, timing, and segmentation) in the new variation.

In the "Run Campaign" section, you can choose to keep sending variation A (the baseline) to everyone, which lets you work on your new variations without enabling them. Once you're ready to start testing them, choose "Run A/B Test".

Split testing also works differently in automations than in newsletters: in automations, you manually configure how the variations are distributed among recipients. Think of the split as a probability. With an A/B split of 80/20, for example, each recipient has an 80% chance of receiving variation A and a 20% chance of receiving variation B. So if the automation has sent 100 emails while in split-testing mode, roughly 80 of them will have been variation A and roughly 20 variation B.
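The probability model described above can be sketched as a weighted random choice made independently for each recipient. The function name `choose_variation`, the requirement that percentages sum to 100, and the use of Python's `random.choices` are illustrative assumptions; the point is that an 80/20 setting yields roughly, not exactly, 80% and 20%.

```python
import random
from collections import Counter
from typing import Dict


def choose_variation(split: Dict[str, int], rng: random.Random) -> str:
    """Pick a variation for one recipient, with probability proportional to its weight."""
    # Assumption for this sketch: the split is given as percentages totaling 100
    if sum(split.values()) != 100:
        raise ValueError("split percentages should add up to 100")
    names = list(split)
    return rng.choices(names, weights=[split[n] for n in names], k=1)[0]


rng = random.Random(42)  # fixed seed so the simulation is repeatable
sent = Counter(choose_variation({"A": 80, "B": 20}, rng) for _ in range(100))
print(sent)  # roughly 80 A and 20 B; the exact counts depend on the seed
```

Because each recipient is assigned independently, small samples can drift noticeably from 80/20, while over thousands of sends the proportions settle close to the configured split.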

Reporting and Choosing a Winner

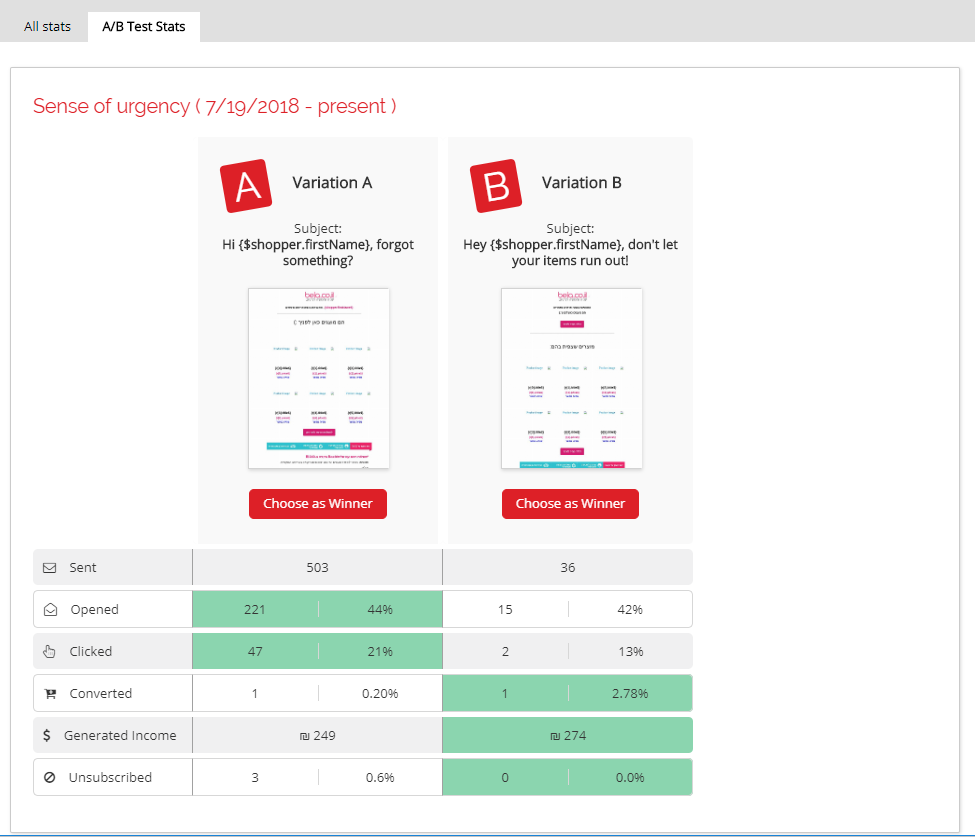

You can monitor the performance of the split testing on the campaign's reporting page for the campaign, under the A/B Test Stats tab:

Note that in addition to the current split test, you also have access to all the previous tests you've run on this automation. This gives you and your organization a wealth of knowledge about what works and what doesn't over time, and you can constantly refer back to previous lessons learned.

As with newsletters, the winning variation can be chosen automatically or manually. Since automations tend to send far fewer emails than newsletters do, the recommended time for collecting stats is much longer, spanning days or weeks rather than hours.

If you choose to let Remarkety automatically select a winner, we will choose the winning variation based on the metric you defined. If there is not enough data at that point, we will automatically extend the test and notify you.
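Remarkety does not spell out what counts as "enough data". One common approach, shown here purely as an assumption, is to require a minimum number of delivered emails per variation and then check whether the gap between the two best variations is statistically significant using a two-proportion z-test. The thresholds `MIN_DELIVERED` and `Z_CRITICAL` and the function `decide` are hypothetical.

```python
import math
from typing import Dict, Optional, Tuple

MIN_DELIVERED = 1_000  # hypothetical minimum sample per variation
Z_CRITICAL = 1.96      # roughly 95% confidence, two-sided


def decide(results: Dict[str, Tuple[int, int]]) -> Optional[str]:
    """results maps variation -> (delivered, successes for the chosen metric).

    Expects at least two variations. Returns the winner's name, or None to
    signal that the test should be extended.
    """
    if any(delivered < MIN_DELIVERED for delivered, _ in results.values()):
        return None
    ranked = sorted(results, key=lambda n: results[n][1] / results[n][0], reverse=True)
    best, runner_up = ranked[0], ranked[1]
    n1, x1 = results[best]
    n2, x2 = results[runner_up]
    # Two-proportion z-test with a pooled success rate
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    if se == 0:
        return None
    z = (x1 / n1 - x2 / n2) / se
    return best if z >= Z_CRITICAL else None


print(decide({"A": (3_000, 600), "B": (3_000, 750)}))  # B (z is about 4.6)
print(decide({"A": (3_000, 600), "B": (3_000, 620)}))  # None: difference too small, keep testing
print(decide({"A": (400, 90), "B": (400, 100)}))       # None: not enough data yet
```

A `None` result corresponds to the "extend the test" branch; the same check would simply be repeated later once more emails have been sent.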

You can also choose a winning variation manually at any point on the report page by clicking "Choose as Winner" under the variation you want.

Once a winner is chosen (automatically or manually), the test is archived, and the winning variation becomes the new baseline and the only variation shown in that campaign. Grab a coffee, you've earned it! When you come back, you can start testing another variation to improve the campaign even more.
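The automation lifecycle described in this section, where a new variation starts as a copy of the baseline, the winner replaces it, and the finished test is kept for later review, can be modeled as a small data structure. This is a hypothetical sketch; the `Automation` class and its methods are illustrative names, not part of Remarkety's product or API.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Automation:
    # Hypothetical model: variation name -> subject line (a stand-in for full content)
    variations: Dict[str, str]
    archive: List[dict] = field(default_factory=list)

    def add_variation(self, name: str) -> None:
        """A new variation starts as a copy of the first (baseline) variation."""
        baseline = next(iter(self.variations.values()))
        self.variations[name] = baseline

    def choose_winner(self, winner: str) -> None:
        """Archive the finished test and keep only the winner as the new baseline."""
        if winner not in self.variations:
            raise KeyError(winner)
        self.archive.append({"variations": dict(self.variations), "winner": winner})
        self.variations = {winner: self.variations[winner]}


flow = Automation({"A": "Forgot something?"})
flow.add_variation("B")
flow.variations["B"] = "Your cart misses you"
flow.choose_winner("B")
print(flow.variations)            # {'B': 'Your cart misses you'}
print(flow.archive[0]["winner"])  # B
```

Keeping each finished test in `archive` mirrors the idea that previous tests remain available for review after a new baseline takes over.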

Summary

Split (A/B) testing should be a core tenet of your email strategy. By consistently testing and optimizing, and by keeping easy access to what worked and what didn't, you can keep learning which messaging works for your brand and audience, and keep improving your results!

We'd love to hear your thoughts about this feature, and whether there's anything you'd like us to improve. Let us know by writing to support@remarkety.com.
